Get 69% Off on Cloud Hosting : Claim Your Offer Now!

- Products

-

Liquid Cooled Data Center

Liquid Cooled Data Center

-

Compute

Compute

- Predefined TemplatesChoose from a library of predefined templates to deploy virtual machines!

- Custom TemplatesUse Cyfuture Cloud custom templates to create new VMs in a cloud computing environment

- Spot Machines/ Machines on Flex ModelAffordable compute instances suitable for batch jobs and fault-tolerant workloads.

- Shielded ComputingProtect enterprise workloads from threats like remote attacks, privilege escalation, and malicious insiders with Shielded Computing

- GPU CloudGet access to graphics processing units (GPUs) through a Cyfuture cloud infrastructure

- vAppsHost applications and services, or create a test or development environment with Cyfuture Cloud vApps, powered by VMware

- Serverless ComputingNo need to worry about provisioning or managing servers, switch to Serverless Computing with Cyfuture Cloud

- HPCHigh-Performance Computing

- BaremetalBare metal refers to a type of cloud computing service that provides access to dedicated physical servers, rather than virtualized servers.

-

Storage

Storage

- Standard StorageGet access to low-latency access to data and a high level of reliability with Cyfuture Cloud standard storage service

- Nearline StorageStore data at a lower cost without compromising on the level of availability with Nearline

- Coldline StorageStore infrequently used data at low cost with Cyfuture Cloud coldline storage

- Archival StorageStore data in a long-term, durable manner with Cyfuture Cloud archival storage service

-

Database

Database

- MS SQLStore and manage a wide range of applications with Cyfuture Cloud MS SQL

- MariaDBStore and manage data with the cloud with enhanced speed and reliability

- MongoDBNow, store and manage large amounts of data in the cloud with Cyfuture Cloud MongoDB

- Redis CacheStore and retrieve large amounts of data quickly with Cyfuture Cloud Redis Cache

-

Automation

Automation

-

Containers

Containers

- KubernetesNow deploy and manage your applications more efficiently and effectively with the Cyfuture Cloud Kubernetes service

- MicroservicesDesign a cloud application that is multilingual, easily scalable, easy to maintain and deploy, highly available, and minimizes failures using Cyfuture Cloud microservices

-

Operations

Operations

- Real-time Monitoring & Logging ServicesMonitor & track the performance of your applications with real-time monitoring & logging services offered by Cyfuture Cloud

- Infra-maintenance & OptimizationEnsure that your organization is functioning properly with Cyfuture Cloud

- Application Performance ServiceOptimize the performance of your applications over cloud with us

- Database Performance ServiceOptimize the performance of databases over the cloud with us

- Security Managed ServiceProtect your systems and data from security threats with us!

- Back-up As a ServiceStore and manage backups of data in the cloud with Cyfuture Cloud Backup as a Service

- Data Back-up & RestoreStore and manage backups of your data in the cloud with us

- Remote Back-upStore and manage backups in the cloud with remote backup service with Cyfuture Cloud

- Disaster RecoveryStore copies of your data and applications in the cloud and use them to recover in the event of a disaster with the disaster recovery service offered by us

-

Networking

Networking

- Load BalancerEnsure that applications deployed across cloud environments are available, secure, and responsive with an easy, modern approach to load balancing

- Virtual Data CenterNo need to build and maintain a physical data center. It’s time for the virtual data center

- Private LinkPrivate Link is a service offered by Cyfuture Cloud that enables businesses to securely connect their on-premises network to Cyfuture Cloud's network over a private network connection

- Private CircuitGain a high level of security and privacy with private circuits

- VPN GatewaySecurely connect your on-premises network to our network over the internet with VPN Gateway

- CDNGet high availability and performance by distributing the service spatially relative to end users with CDN

-

Media

-

Analytics

Analytics

-

Security

Security

-

Network Firewall

- DNATTranslate destination IP address when connecting from public IP address to a private IP address with DNAT

- SNATWith SNAT, allow traffic from a private network to go to the internet

- WAFProtect your applications from any malicious activity with Cyfuture Cloud WAF service

- DDoSSave your organization from DoSS attacks with Cyfuture Cloud

- IPS/ IDSMonitor and prevent your cloud-based network & infrastructure with IPS/ IDS service by Cyfuture Cloud

- Anti-Virus & Anti-MalwareProtect your cloud-based network & infrastructure with antivirus and antimalware services by Cyfuture Cloud

- Threat EmulationTest the effectiveness of cloud security system with Cyfuture Cloud threat emulation service

- SIEM & SOARMonitor and respond to security threats with SIEM & SOAR services offered by Cyfuture Cloud

- Multi-Factor AuthenticationNow provide an additional layer of security to prevent unauthorized users from accessing your cloud account, even when the password has been stolen!

- SSLSecure data transmission over web browsers with SSL service offered by Cyfuture Cloud

- Threat Detection/ Zero DayThreat detection and zero-day protection are security features that are offered by Cyfuture Cloud as a part of its security offerings

- Vulnerability AssesmentIdentify and analyze vulnerabilities and weaknesses with the Vulnerability Assessment service offered by Cyfuture Cloud

- Penetration TestingIdentify and analyze vulnerabilities and weaknesses with the Penetration Testing service offered by Cyfuture Cloud

- Cloud Key ManagementSecure storage, management, and use of cryptographic keys within a cloud environment with Cloud Key Management

- Cloud Security Posture Management serviceWith Cyfuture Cloud, you get continuous cloud security improvements and adaptations to reduce the chances of successful attacks

- Managed HSMProtect sensitive data and meet regulatory requirements for secure data storage and processing.

- Zero TrustEnsure complete security of network connections and devices over the cloud with Zero Trust Service

- IdentityManage and control access to their network resources and applications for your business with Identity service by Cyfuture Cloud

-

-

Liquid Cooled Data Center

- Solutions

-

Solutions

Solutions

-

Cloud

Hosting

Cloud

Hosting

-

VPS

Hosting

VPS

Hosting

-

GPU Cloud

-

Dedicated

Server

Dedicated

Server

-

Server

Colocation

Server

Colocation

-

Backup as a Service

Backup as a Service

-

CDN

Network

CDN

Network

-

Window

Cloud Hosting

Window

Cloud Hosting

-

Linux

Cloud Hosting

Linux

Cloud Hosting

-

Managed Cloud Service

-

Storage as a Service

-

VMware

Public Cloud

VMware

Public Cloud

-

Multi-Cloud

Hosting

Multi-Cloud

Hosting

-

Cloud

Server Hosting

Cloud

Server Hosting

-

Bare

Metal Server

Bare

Metal Server

-

Virtual

Machine

Virtual

Machine

-

Magento

Hosting

Magento

Hosting

-

Remote Backup

-

DevOps

DevOps

-

Kubernetes

Kubernetes

-

Cloud

Storage

Cloud

Storage

-

NVMe Hosting

-

DR

as s Service

DR

as s Service

-

-

Solutions

- Marketplace

- Pricing

- Resources

- Resources

-

By Product

Use Cases

-

By Industry

- Company

-

Company

Company

-

Company

Why AI Teams Are Moving GPU Cloud Servers Into Private Colocation Cages

Table of Contents

- Why%20AI%20Teams%20Are%20Moving%20GPU%20Cloud%20Servers%20Into%20Private%20Colocation%20Cages<%2Fstrong>%20https%3A%2F%2Fcyfuture.cloud%2Fblog%2Fwhy-ai-teams-are-moving-gpu-cloud-servers-into-private-colocation-cages%2F" title="" onclick="essb.tracking_only('', 'whatsapp', '1524405333', true);" target="_self" rel="nofollow" >WhatsApp

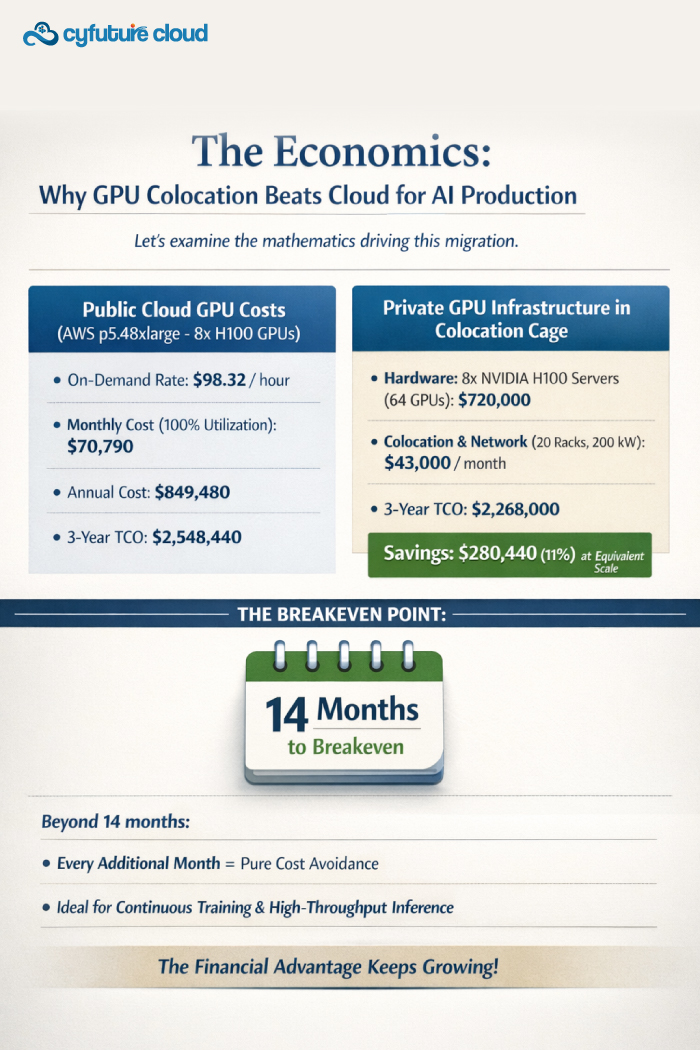

A 2024 MLOps Community survey revealed that 68% of AI teams running production workloads on cloud GPU instances experienced cost overruns exceeding 200% of initial budgets. The culprit? The intersection of insatiable GPU demand for training large language models (LLMs), computer vision systems, and generative AI applications with public cloud pricing models that weren’t designed for sustained, high-utilization compute workloads.

Here’s what’s happening:

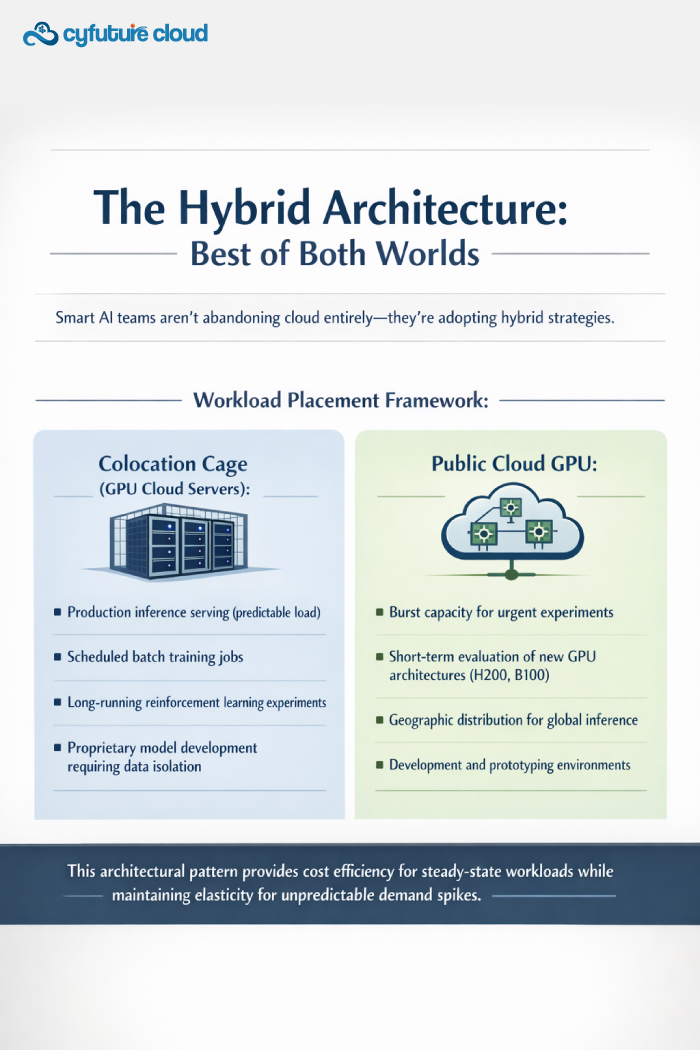

Leading AI organizations—from autonomous vehicle startups to healthcare AI labs—are executing a strategic infrastructure shift. They’re purchasing NVIDIA H100, A100, and L40S GPU cloud servers and deploying them in private colocation cages rather than renting them hourly from hyperscalers.

The result? 60-75% cost reductions over 36 months while gaining performance control that public cloud simply cannot deliver.

What Is a Colocation Cage and Why Does It Matter for GPU Infrastructure?

A colocation cage is a physically secured, private enclosure within a data center facility where organizations deploy their own hardware infrastructure. Unlike shared rack space, cages offer:

- Exclusive floor space: Typically 100-2,000 square feet for dense server deployments

- Dedicated power circuits: 50-500 kW with customizable redundancy (N+1 or 2N configurations)

- Physical isolation: Chain-link or metal panel barriers with individual access control

- Custom cooling: Ability to implement rear-door heat exchangers or liquid cooling for GPU densities

For AI workloads, this architecture solves a critical problem:

GPU cloud servers generate extreme heat density—an 8-GPU NVIDIA H100 server consumes 10.2 kW and produces 34,800 BTU/hour. Standard data center racks designed for 5-8 kW can’t accommodate modern AI infrastructure without specialized cooling, which colocation cages provide through customized environmental controls.

Technical Advantages: Performance Control Public Cloud Cannot Match

1. Network Topology Optimization

GPU cloud servers in a private colocation cage enable custom InfiniBand or RoCE (RDMA over Converged Ethernet) fabrics. This matters critically for distributed training:

- Cloud inter-instance bandwidth: 100-400 Gbps with variable latency (5-50 microseconds)

- Private InfiniBAN fabric: 400-800 Gbps with deterministic sub-2 microsecond latency

Training a GPT-3 scale model (175B parameters) across 1,024 GPUs:

- Cloud configuration: 28-35 days training time

- Optimized colocation: 18-22 days training time

Time savings translate directly to competitive advantage in AI research and product development.

2. Storage Performance and Data Gravity

AI training datasets increasingly exceed 100TB. Cloud storage costs become prohibitive:

- AWS S3 storage: $0.023/GB/month = $23,000/month for 100TB

- Data egress: $0.09/GB = $9,000 per full dataset transfer

A colocation cage enables:

- Direct-attached NVMe storage arrays delivering 20-40 GB/s read throughput

- Zero egress fees for data movement between storage and compute

- Persistent fast storage (no cold start penalties)

3. GPU Utilization Optimization

Public cloud GPU instances bill hourly regardless of utilization. If your training job uses 60% average GPU utilization due to data loading bottlenecks, you’re paying for 40% idle capacity.

In a private colocation cage with owned GPU cloud servers:

- Optimize workload scheduling across your entire GPU fleet

- Run lower-priority inference workloads on temporarily idle training GPUs

- Achieve 85-95% sustained utilization through multi-tenancy

Real-World Success: AI Infrastructure Migration Case Study

Computer Vision Startup – Autonomous Driving

Challenge: Training perception models on 500TB video dataset with 12-hour iteration cycles costing $180,000/month on AWS p4d instances.

Solution: Deployed 48 NVIDIA A100 GPU cloud servers in Cyfuture Cloud colocation cage (Sydney facility).

Results after 18 months:

- Cost reduction: 68% ($115,000 monthly savings)

- Training acceleration: 40% faster due to optimized storage architecture

- Iteration velocity: Daily model updates vs. 3x weekly previously

- ROI: 11-month payback period

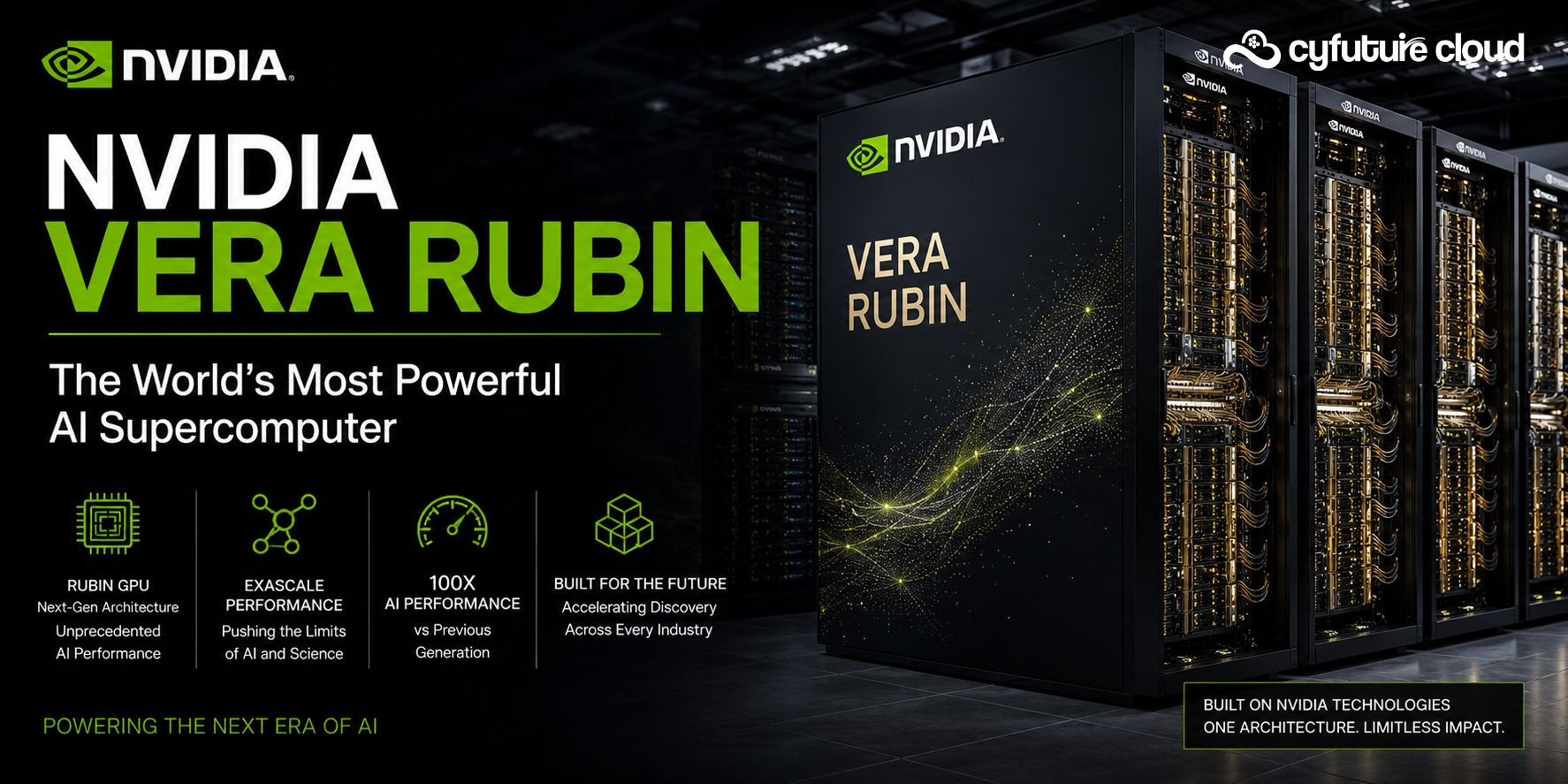

Future-Proofing: The AI Infrastructure Roadmap

The trajectory is clear:

NVIDIA’s 2024-2026 GPU roadmap (H200, B100, X100 architectures) continues increasing compute density and power requirements. By 2026, flagship AI accelerators will consume 1,000-1,500W per GPU (up from 700W for H100).

Colocation cages provide the infrastructure flexibility to evolve:

- Upgrade cooling systems as power density increases

- Swap GPU generations without changing facility contracts

- Scale storage and network independently from compute

- Adapt to emerging technologies (optical interconnects, quantum accelerators)

Public cloud GPU pricing historically remains static or increases as new generations launch—owning infrastructure in colocation cages protects against vendor pricing changes.

Architect Your Competitive AI Infrastructure Advantage

The economics and technical benefits are undeniable:

For AI teams with sustained GPU requirements, colocation cages housing privately-owned GPU cloud servers deliver superior cost efficiency, performance control, and strategic flexibility compared to renting cloud GPUs indefinitely.

Your decision framework:

If your AI workloads require GPUs for 12+ months at 50%+ average utilization, the financial case for colocation cages becomes compelling. If you need specialized network topologies, data sovereignty, or maximum performance for competitive advantage, the technical case is equally strong.

Start by calculating your current cloud GPU spend and utilization patterns. Model the capital expenditure for equivalent owned infrastructure in a colocation cage. Factor in your team’s operational capabilities—managing physical infrastructure requires skills distinct from cloud operations.

Cyfuture Cloud eliminates the operational complexity through managed colocation cage services that deliver the economics and performance of private GPU infrastructure without requiring you to become a data center expert.

Transform your AI infrastructure from a mounting cost center into a strategic competitive advantage—architect for performance, optimize for economics, and scale without compromise in purpose-built colocation cages designed specifically for the extreme demands of modern GPU workloads.

Recent Post

Stay Ahead of the Curve.

Join the Cloud Movement, today!

© Cyfuture, All rights reserved.

Send this to a friend

Pricing

Calculator

Pricing

Calculator

Power

Power

Utilities

Utilities VMware

Private Cloud

VMware

Private Cloud VMware

on AWS

VMware

on AWS VMware

on Azure

VMware

on Azure Service

Level Agreement

Service

Level Agreement