Get 69% Off on Cloud Hosting : Claim Your Offer Now!

- Products

-

Compute

Compute

- Predefined TemplatesChoose from a library of predefined templates to deploy virtual machines!

- Custom TemplatesUse Cyfuture Cloud custom templates to create new VMs in a cloud computing environment

- Spot Machines/ Machines on Flex ModelAffordable compute instances suitable for batch jobs and fault-tolerant workloads.

- Shielded ComputingProtect enterprise workloads from threats like remote attacks, privilege escalation, and malicious insiders with Shielded Computing

- GPU CloudGet access to graphics processing units (GPUs) through a Cyfuture cloud infrastructure

- vAppsHost applications and services, or create a test or development environment with Cyfuture Cloud vApps, powered by VMware

- Serverless ComputingNo need to worry about provisioning or managing servers, switch to Serverless Computing with Cyfuture Cloud

- HPCHigh-Performance Computing

- BaremetalBare metal refers to a type of cloud computing service that provides access to dedicated physical servers, rather than virtualized servers.

-

Storage

Storage

- Standard StorageGet access to low-latency access to data and a high level of reliability with Cyfuture Cloud standard storage service

- Nearline StorageStore data at a lower cost without compromising on the level of availability with Nearline

- Coldline StorageStore infrequently used data at low cost with Cyfuture Cloud coldline storage

- Archival StorageStore data in a long-term, durable manner with Cyfuture Cloud archival storage service

-

Database

Database

- MS SQLStore and manage a wide range of applications with Cyfuture Cloud MS SQL

- MariaDBStore and manage data with the cloud with enhanced speed and reliability

- MongoDBNow, store and manage large amounts of data in the cloud with Cyfuture Cloud MongoDB

- Redis CacheStore and retrieve large amounts of data quickly with Cyfuture Cloud Redis Cache

-

Automation

Automation

-

Containers

Containers

- KubernetesNow deploy and manage your applications more efficiently and effectively with the Cyfuture Cloud Kubernetes service

- MicroservicesDesign a cloud application that is multilingual, easily scalable, easy to maintain and deploy, highly available, and minimizes failures using Cyfuture Cloud microservices

-

Operations

Operations

- Real-time Monitoring & Logging ServicesMonitor & track the performance of your applications with real-time monitoring & logging services offered by Cyfuture Cloud

- Infra-maintenance & OptimizationEnsure that your organization is functioning properly with Cyfuture Cloud

- Application Performance ServiceOptimize the performance of your applications over cloud with us

- Database Performance ServiceOptimize the performance of databases over the cloud with us

- Security Managed ServiceProtect your systems and data from security threats with us!

- Back-up As a ServiceStore and manage backups of data in the cloud with Cyfuture Cloud Backup as a Service

- Data Back-up & RestoreStore and manage backups of your data in the cloud with us

- Remote Back-upStore and manage backups in the cloud with remote backup service with Cyfuture Cloud

- Disaster RecoveryStore copies of your data and applications in the cloud and use them to recover in the event of a disaster with the disaster recovery service offered by us

-

Networking

Networking

- Load BalancerEnsure that applications deployed across cloud environments are available, secure, and responsive with an easy, modern approach to load balancing

- Virtual Data CenterNo need to build and maintain a physical data center. It’s time for the virtual data center

- Private LinkPrivate Link is a service offered by Cyfuture Cloud that enables businesses to securely connect their on-premises network to Cyfuture Cloud's network over a private network connection

- Private CircuitGain a high level of security and privacy with private circuits

- VPN GatewaySecurely connect your on-premises network to our network over the internet with VPN Gateway

- CDNGet high availability and performance by distributing the service spatially relative to end users with CDN

-

Media

-

Analytics

Analytics

-

Security

Security

-

Network Firewall

- DNATTranslate destination IP address when connecting from public IP address to a private IP address with DNAT

- SNATWith SNAT, allow traffic from a private network to go to the internet

- WAFProtect your applications from any malicious activity with Cyfuture Cloud WAF service

- DDoSSave your organization from DoSS attacks with Cyfuture Cloud

- IPS/ IDSMonitor and prevent your cloud-based network & infrastructure with IPS/ IDS service by Cyfuture Cloud

- Anti-Virus & Anti-MalwareProtect your cloud-based network & infrastructure with antivirus and antimalware services by Cyfuture Cloud

- Threat EmulationTest the effectiveness of cloud security system with Cyfuture Cloud threat emulation service

- SIEM & SOARMonitor and respond to security threats with SIEM & SOAR services offered by Cyfuture Cloud

- Multi-Factor AuthenticationNow provide an additional layer of security to prevent unauthorized users from accessing your cloud account, even when the password has been stolen!

- SSLSecure data transmission over web browsers with SSL service offered by Cyfuture Cloud

- Threat Detection/ Zero DayThreat detection and zero-day protection are security features that are offered by Cyfuture Cloud as a part of its security offerings

- Vulnerability AssesmentIdentify and analyze vulnerabilities and weaknesses with the Vulnerability Assessment service offered by Cyfuture Cloud

- Penetration TestingIdentify and analyze vulnerabilities and weaknesses with the Penetration Testing service offered by Cyfuture Cloud

- Cloud Key ManagementSecure storage, management, and use of cryptographic keys within a cloud environment with Cloud Key Management

- Cloud Security Posture Management serviceWith Cyfuture Cloud, you get continuous cloud security improvements and adaptations to reduce the chances of successful attacks

- Managed HSMProtect sensitive data and meet regulatory requirements for secure data storage and processing.

- Zero TrustEnsure complete security of network connections and devices over the cloud with Zero Trust Service

- IdentityManage and control access to their network resources and applications for your business with Identity service by Cyfuture Cloud

-

-

Compute

- Solutions

-

Solutions

Solutions

-

Cloud

Hosting

Cloud

Hosting

-

VPS

Hosting

VPS

Hosting

-

GPU Cloud

-

Dedicated

Server

Dedicated

Server

-

Server

Colocation

Server

Colocation

-

Backup as a Service

Backup as a Service

-

CDN

Network

CDN

Network

-

Window

Cloud Hosting

Window

Cloud Hosting

-

Linux

Cloud Hosting

Linux

Cloud Hosting

-

Managed Cloud Service

-

Storage as a Service

-

VMware

Public Cloud

VMware

Public Cloud

-

Multi-Cloud

Hosting

Multi-Cloud

Hosting

-

Cloud

Server Hosting

Cloud

Server Hosting

-

Bare

Metal Server

Bare

Metal Server

-

Virtual

Machine

Virtual

Machine

-

Magento

Hosting

Magento

Hosting

-

Remote Backup

-

DevOps

DevOps

-

Kubernetes

Kubernetes

-

Cloud

Storage

Cloud

Storage

-

NVMe Hosting

-

DR

as s Service

DR

as s Service

-

-

Solutions

- Marketplace

- Pricing

- Resources

- Resources

-

By Product

Use Cases

-

By Industry

- Company

-

Company

Company

-

Company

H100 GPU as a Service in 2026: The Fastest Path to Enterprise AI at Scale

Table of Contents

- Why the H100 GPU Dominates Enterprise AI in 2026

- GPU as a Service: The Smart Way to Access H100 Power

- Real-World Performance: H100 at Scale

- H100 vs. H200: Should You Wait for the Next Gen?

- Use Cases Accelerated by H100 GPU as a Service

- 1. Generative AI & LLM Training

- 2. Real-Time Inference at Scale

- 3. High-Performance Computing (HPC)

- 4. Computer Vision & Autonomous Systems

- Why Cyfuture Cloud for H100 GPU as a Service?

- The Bottom Line

In 2026, AI isn’t just transforming industries—it’s redefining them. From hyper-realistic generative models to autonomous decision-making systems, enterprise AI is moving at breakneck speed. But there’s a bottleneck: compute. The NVIDIA H100 GPU has emerged as the gold standard for powering this revolution, and GPU as a Service is the fastest, most scalable way for enterprises to access it without the astronomical capex of on-premise hardware.

If you’re a tech leader, developer, or enterprise strategist aiming to deploy AI at scale in 2026, the H100 isn’t optional—it’s essential.

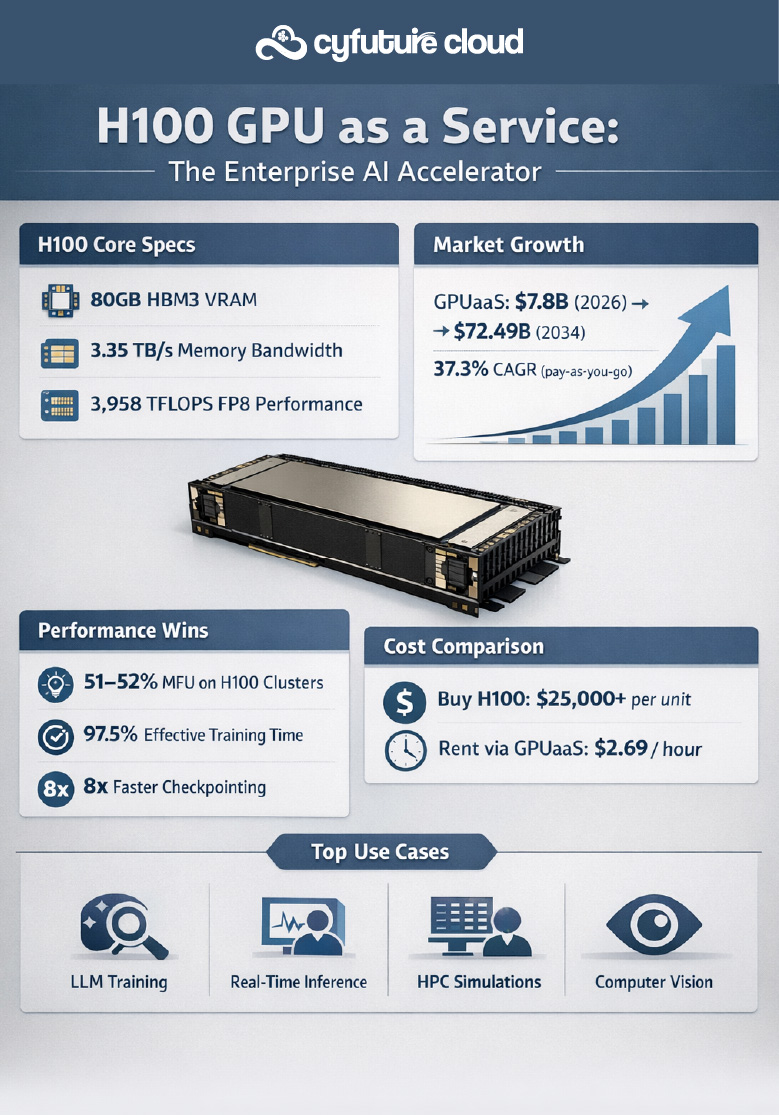

Why the H100 GPU Dominates Enterprise AI in 2026

The H100 Tensor Core GPU, built on NVIDIA’s Hopper architecture, is the backbone of modern AI infrastructure. As of Q3 fiscal 2026, NVIDIA’s data center revenue hit $51.2 billion, up 66% year-over-year, driven largely by H100 demand.

Key H100 Specifications That Make It Irreplaceable

|

Specification |

H100 SXM |

H100 PCIe |

|

Architecture |

NVIDIA Hopper |

NVIDIA Hopper |

|

GPU Memory |

80GB HBM3 |

80GB HBM3 |

|

Memory Bandwidth |

3.35 TB/s |

2.0 TB/s |

|

TDP (Power) |

700W |

350W |

|

FP8 Performance |

3,958 TFLOPS |

2,000 TFLOPS |

|

FP16 Performance |

1,979 TFLOPS |

1,000 TFLOPS |

|

FP32 Performance |

989 TFLOPS |

500 TFLOPS |

The H100’s 4th-generation Tensor Cores with FP8 Transformer Engine deliver up to 3,958 TFLOPS in FP8 throughput—making it 6–9x faster than the previous A100 for transformer workloads. This is why 90%+ of large language model (LLM) training in 2026 runs on H100 clusters.

GPU as a Service: The Smart Way to Access H100 Power

Buying an H100 outright costs $25,000+ per unit, and building a cluster demands massive capital, cooling, and maintenance. Enter GPU as a Service (GPUaaS): on-demand cloud access to H100 GPUs with pay-as-you-go pricing starting at just $2.69/hour.

GPUaaS Market Growth in 2026

|

Metric |

Value |

|

Global GPUaaS Market (2025) |

$5.79 billion |

|

GPUaaS Market (2026) |

$7.80 billion |

|

Projected GPUaaS Market (2034) |

$72.49 billion |

|

CAGR (2026–2034) |

37.3% (pay-as-you-go segment) |

|

Large Enterprises Adoption (2026) |

61.30% market share |

Large enterprises dominate GPUaaS adoption because managing GPU hardware is resource-intensive. Cloud GPU eliminates the burden of upgrades, maintenance, and power costs while offering flexible scaling for intermittent workloads.

Real-World Performance: H100 at Scale

CoreWeave’s large-scale testing of H100 clusters revealed groundbreaking results:

- 51–52% Model FLOPS Utilization (MFU) vs. industry-typical 35–45%

- 3.66 days Mean Time To Failure (MTTF) at 1,024 GPUs (10x improvement)

- 97.5% Effective Training Time Ratio (ETTR)

- 8x faster checkpointing using async Tensorizer

These benchmarks prove H100 clusters can deliver production-grade reliability for enterprise AI—critical when training models like Llama 3 or deploying real-time inference at millions of requests per second.

H100 vs. H200: Should You Wait for the Next Gen?

NVIDIA’s H200, released in late 2024, offers 141GB HBM3e memory (vs. H100’s 80GB) and 42% faster LLM inference. However, the H100 remains the workhorse of 2026 AI infrastructure:

- Ecosystem maturity: Better library support, tooling, and enterprise integrations

- Cost efficiency: H100 pricing is 30–40% lower than H200

- Mass availability: H200 supply is still constrained; H100 is widely available via GPUaaS

For most enterprises, the H100 delivers the best performance-per-dollar in 2026.

Use Cases Accelerated by H100 GPU as a Service

1. Generative AI & LLM Training

Training a 70B-parameter model on H100 takes ~3.66 days on a 1,024-GPU cluster vs. weeks on older GPUs.

2. Real-Time Inference at Scale

H100’s 3.35 TB/s memory bandwidth enables sub-millisecond inference for chatbots, recommendation engines, and computer vision systems.

3. High-Performance Computing (HPC)

From molecular simulation to climate modeling, H100 accelerates HPC workloads by 5–10x compared to CPU-only systems.

4. Computer Vision & Autonomous Systems

H100 processes thousands of video streams simultaneously for fraud detection, medical imaging, and autonomous vehicles.

Why Cyfuture Cloud for H100 GPU as a Service?

At Cyfuture Cloud, we don’t just provide H100 GPUs—we provide a complete AI infrastructure ecosystem:

- H100 80GB PCIe servers with transparent, flexible pricing

- Serverless inferencing for auto-scaling AI workloads

- Multi-tenant MIG (Multi-Instance GPU) for secure, efficient resource sharing

- NVIDIA GPU Cloud integration for pre-optimized AI containers

- Low-latency, enterprise-grade infrastructure tuned for AI/ML workloads

Whether you’re training foundation models, deploying real-time AI, or running complex simulations, Cyfuture ensures scalable, cost-efficient computing tailored to your needs.

The Bottom Line

In 2026, the H100 GPU is the fastest path to enterprise AI at scale—and GPU as a Service is the smartest way to access it. With $500+ billion in global AI spending projected this year, the question isn’t whether to adopt H100-powered AI—it’s how quickly you can deploy it.

Cyfuture Cloud gives you instant access to H100 power with enterprise-grade reliability, flexible pricing, and zero infrastructure overhead. Don’t let compute become your bottleneck.

Recent Post

Stay Ahead of the Curve.

Join the Cloud Movement, today!

© Cyfuture, All rights reserved.

Send this to a friend

Pricing

Calculator

Pricing

Calculator

Power

Power

Utilities

Utilities VMware

Private Cloud

VMware

Private Cloud VMware

on AWS

VMware

on AWS VMware

on Azure

VMware

on Azure Service

Level Agreement

Service

Level Agreement