Table of Contents

- Understanding Cloud GPU Pricing in 2026

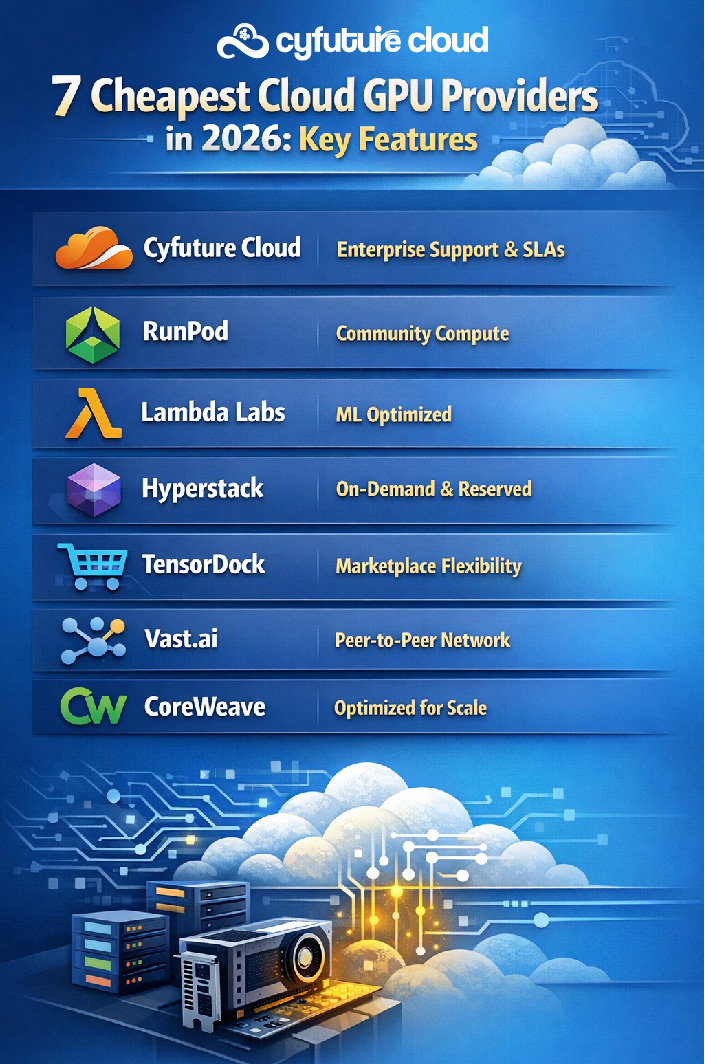

- The 7 Cheapest Cloud GPU Providers in 2026

- Key Considerations When Choosing a GPU Provider

- 2026 Market Trends and Emerging Players

- Maximizing Your Cloud GPU Investment

- Why Cyfuture Cloud Leads in Value and Performance

- Conclusion

- Frequently Asked Questions (FAQs)

- Q1: How does Cyfuture Cloud’s GPU pricing compare to the providers listed?

- Q2: What’s the difference between spot instances and on-demand instances, and which should I choose?

- Q3: Are there hidden costs I should be aware of when using cloud GPU providers?

- Q4: What GPU should I choose for my AI/ML workload—H100, A100, or L40S?

- Q5: How can I reduce my cloud GPU costs without sacrificing performance?

The demand for GPU computing has skyrocketed in 2026, driven by advances in artificial intelligence, machine learning, 3D rendering, and high-performance computing (HPC). However, accessing powerful GPU resources doesn’t have to break the bank. With the proliferation of specialized cloud GPU providers leveraging NVIDIA’s latest hardware—including the A100, H100, H200, and L40S—businesses and researchers can now access enterprise-grade computing power at a fraction of traditional costs.

Whether you’re training large language models, running complex simulations, or rendering CGI, choosing the right cloud GPU provider can significantly impact your bottom line. In this comprehensive guide, we’ll explore the seven cheapest cloud GPU providers in 2026 and help you make an informed decision for your computational needs.

Understanding Cloud GPU Pricing in 2026

Before diving into specific providers, it’s essential to understand what makes GPU cloud computing affordable in 2026. The market has evolved from the monopoly of major public clouds to a diverse ecosystem of specialized providers offering pay-as-you-go and spot instance pricing models. Spot instances, in particular, can reduce costs by 20–80% compared to on-demand pricing by utilizing unused compute capacity.

The pricing landscape is dominated by NVIDIA hardware, with H100 GPU and H200 GPUs becoming the standard for AI training workloads, while older A100 GPU and L40S models offer excellent value for inference tasks. However, smart buyers look beyond the hourly rate—hidden costs like data egress fees ($0.08–$0.12/GB) and storage costs ($0.10–$0.30/GB) can significantly inflate your total expenditure.

The 7 Cheapest Cloud GPU Providers in 2026

1. Cyfuture Cloud

Cyfuture Cloud stands out as a comprehensive solution that combines competitive pricing with enterprise-grade infrastructure and dedicated support. Unlike bare-bones GPU rental services, Cyfuture Cloud offers end-to-end cloud solutions with robust security, compliance certifications, and customizable configurations. Our platform supports the full range of NVIDIA hardware including A100, H100, H200 GPU, and L40S GPUs, with flexible pricing models that include on-demand, reserved, and spot instances. What sets Cyfuture Cloud apart is our commitment to transparent pricing, predictable costs, and expert consultation to optimize your GPU spend. Whether you’re a startup experimenting with AI or an enterprise scaling production workloads, our team works closely with you to architect cost-effective solutions that don’t compromise on performance or reliability.

2. RunPod

RunPod has emerged as one of the most cost-effective options for AI practitioners in 2026. Their community-driven compute model enables A100 instances starting at approximately $1.19/hr, making it accessible for startups and individual researchers. RunPod supports the full range of NVIDIA’s latest hardware, including A100, H100, and H200 instances, with an intuitive interface that eliminates complexity. Their serverless GPU offering is particularly attractive for intermittent workloads, ensuring you only pay for actual compute time.

3. Lambda Labs

For those requiring cutting-edge performance, Lambda Labs consistently delivers some of the lowest on-demand prices for H100 and H200 GPUs. With H100 PCIe instances starting around $2.49/hr, Lambda Labs has become the go-to choice for large-scale training workloads. Their infrastructure is purpose-built for machine learning, offering pre-configured environments with popular frameworks like PyTorch and TensorFlow, reducing setup time and accelerating time-to-insight.

4. Hyperstack

Specializing exclusively in GPU cloud services, Hyperstack offers H100 SXM and L40 configurations optimized for high-performance computing. Their focus on cost-effective solutions with both on-demand and reserved pricing options provides flexibility for various budget constraints. Hyperstack’s infrastructure is designed for maximum utilization, ensuring you get the best performance per dollar spent.

5. TensorDock

TensorDock’s marketplace-style approach to GPU provisioning sets it apart from traditional providers. By offering highly customizable configurations, users can fine-tune their instances to match exact requirements, eliminating waste. Their A100 80GB instances are available at competitive rates, and the platform’s flexibility makes it ideal for experimental workloads where requirements may change frequently.

6. Vast.ai

Leveraging a revolutionary peer-to-peer network, Vast.ai frequently offers the absolute lowest prices in the market by tapping into unused, distributed compute power globally. This decentralized approach can yield savings of up to 80% compared to traditional providers. While the community-driven model may involve some variability in availability, the cost savings are unmatched for price-sensitive projects.

7. CoreWeave

CoreWeave’s Kubernetes-native infrastructure provides enterprise-grade scalability with cost-effective pricing. Specialized in GPU-accelerated workloads, CoreWeave excels at scaling large language models and distributed training jobs. Their optimized architecture ensures minimal overhead, translating to better performance per dollar and making them a favorite among AI companies scaling rapidly.

Key Considerations When Choosing a GPU Provider

GPU Selection Strategy: While H100 and H200 GPUs represent the pinnacle of performance, they’re not always necessary. For inference workloads, older A100s or L40S models offer excellent value at significantly lower price points. Assess your actual computational requirements before defaulting to the latest hardware.

Spot vs. On-Demand Instances: Spot instances can slash costs by over 50%, but they come with the risk of interruption. For fault-tolerant workloads like batch processing or model experimentation, spot instances are ideal. Critical production workloads benefit from the stability of on-demand or reserved instances.

Hidden Cost Management: The advertised hourly rate is just the beginning. Data egress fees accumulate quickly when moving large datasets or model weights. Storage costs also add up, especially for projects requiring persistent volumes. Calculate your total cost of ownership (TCO) by factoring in all ancillary expenses.

2026 Market Trends and Emerging Players

The GPU cloud market continues to democratize access to computational resources. Emerging players like SiliconFlow and Fluence are making waves with specialized offerings for AI inference and training, often at prices that undercut established providers. These newcomers leverage innovative architectures and business models to deliver exceptional value.

The shift toward sustainable computing is also influencing provider selection, with companies like Genesis Cloud leading the charge in carbon-neutral GPU hosting. As environmental concerns grow, expect more providers to adopt green energy sources without passing premium costs to customers.

Maximizing Your Cloud GPU Investment

To optimize your GPU spending, consider these strategies:

- Right-size your instances: Don’t overprovision. Match GPU capabilities to workload requirements.

- Implement auto-scaling: Automatically adjust resources based on demand to avoid paying for idle capacity.

- Use monitoring tools: Track GPU utilization to identify optimization opportunities.

- Experiment with smaller GPUs: For development and testing, lower-tier GPUs often suffice.

- Leverage free tiers and credits: Many providers offer initial credits for new customers—take advantage of these for evaluation.

Why Cyfuture Cloud Leads in Value and Performance

While specialized GPU providers offer attractive hourly rates, Cyfuture Cloud combines competitive pricing with enterprise-grade support and customization options that many bare-bones providers cannot match. Our infrastructure is designed for businesses requiring not just affordable GPUs, but comprehensive cloud solutions with robust security, compliance certifications, and dedicated technical support.

Cyfuture Cloud offers flexible pricing models, multi-GPU configurations, and seamless integration with existing cloud infrastructure. Whether you’re running AI workloads, rendering pipelines, or HPC simulations, our team works with you to optimize both performance and cost. Unlike marketplace-style providers where you’re on your own, Cyfuture Cloud provides:

- 24/7 Technical Support: Expert assistance when you need it most

- Custom Configurations: Tailored solutions for your specific workload

- Enterprise SLAs: Guaranteed uptime and performance commitments

- Transparent Pricing: No hidden fees or surprise charges

- Compliance Ready: SOC 2, ISO certifications, and industry-specific compliance

- Hybrid Cloud Integration: Seamless connectivity with your existing infrastructure

Conclusion

The landscape of cloud GPU providers in 2026 is more competitive and accessible than ever. From Cyfuture Cloud’s enterprise-grade comprehensive solutions to RunPod’s community-driven affordability, Lambda Labs’ high-performance infrastructure, and Vast.ai’s peer-to-peer innovation, options abound for every budget and use case.

The key to maximizing value lies in understanding your specific requirements, carefully evaluating total costs beyond hourly rates, and choosing a provider that aligns with your technical needs and business objectives. Don’t hesitate to test multiple providers—most offer trial periods or pay-as-you-go options that minimize risk.

For businesses seeking the optimal balance of cost, performance, reliability, and support, Cyfuture Cloud stands as the premier choice in 2026’s competitive GPU cloud market.

Frequently Asked Questions (FAQs)

Q1: How does Cyfuture Cloud’s GPU pricing compare to the providers listed?

A: Cyfuture Cloud offers competitive pricing comparable to specialized GPU providers, with the added advantage of enterprise-grade support, compliance certifications, and comprehensive cloud infrastructure. While providers like RunPod and Vast.ai excel in bare-bones affordability, Cyfuture Cloud delivers superior value for businesses requiring integrated solutions, dedicated account management, and guaranteed SLAs. We also offer customized pricing for long-term commitments and volume users, often matching or beating spot instance rates of specialized providers. Most importantly, our transparent pricing eliminates hidden costs—what you see is what you pay, with expert guidance to optimize your spending.

Q2: What’s the difference between spot instances and on-demand instances, and which should I choose?

A: Spot instances utilize unused compute capacity at significantly reduced rates (20–80% cheaper) but can be interrupted with short notice when demand increases. On-demand instances provide guaranteed availability at standard pricing. For development, testing, batch processing, and fault-tolerant workloads, spot instances offer excellent value. For production systems, real-time applications, and critical deadlines, on-demand or reserved instances provide reliability. Cyfuture Cloud supports both models and can help you architect hybrid approaches that balance cost and reliability. Our technical team analyzes your workload patterns and recommends the optimal mix to maximize savings without compromising performance.

A: Yes, several costs beyond hourly GPU rates can impact your total expenditure. Data egress fees ($0.08–$0.12/GB) apply when transferring data out of the cloud, which accumulates quickly with large datasets. Storage costs ($0.10–$0.30/GB/month) for persistent volumes add up over time. Network bandwidth, snapshot storage, and premium support tiers also carry additional charges. At Cyfuture Cloud, we provide transparent pricing calculators and work with you to forecast total costs accurately, eliminating surprise charges. During onboarding, our cost optimization specialists review your architecture to identify potential cost drivers and recommend strategies to minimize expenses while maintaining performance.

Q4: What GPU should I choose for my AI/ML workload—H100, A100, or L40S?

A: The choice depends on your specific use case. H100 and H200 GPUs are ideal for large-scale model training, offering superior performance for transformer architectures and large language models. A100s remain excellent for both training and inference, providing a strong balance of performance and cost-effectiveness. L40S GPUs excel at inference workloads, offering great value when raw training speed isn’t critical. For most inference applications and smaller models, A100 or L40S GPUs deliver optimal price-performance. Cyfuture Cloud’s technical team can assess your workload requirements and recommend the most cost-effective GPU configuration. We also offer benchmarking services to test your actual code on different GPU types, ensuring you make data-driven decisions rather than guesses.

Q5: How can I reduce my cloud GPU costs without sacrificing performance?

A: Several strategies can optimize your GPU spending: (1) Right-size instances to match actual requirements—don’t overprovision. (2) Use spot instances for non-critical workloads to achieve 50–80% savings. (3) Implement auto-scaling to eliminate paying for idle resources. (4) Optimize your code and models for GPU efficiency—better utilization means less time required. (5) Use cheaper GPUs for development/testing, reserving premium hardware for production. (6) Batch similar workloads to maximize GPU utilization. (7) Consider reserved instances for predictable, long-term workloads. Cyfuture Cloud offers complimentary cost optimization consultations to help you implement these strategies effectively. Our monitoring tools provide real-time visibility into GPU utilization, helping you identify waste and opportunities for savings. Additionally, our experts can review your model architecture and suggest optimizations that reduce compute requirements without impacting accuracy.

Recent Post

Send this to a friend

Server

Colocation

Server

Colocation CDN

Network

CDN

Network Linux

Cloud Hosting

Linux

Cloud Hosting Kubernetes

Kubernetes Pricing

Calculator

Pricing

Calculator

Power

Power

Utilities

Utilities VMware

Private Cloud

VMware

Private Cloud VMware

on AWS

VMware

on AWS VMware

on Azure

VMware

on Azure Service

Level Agreement

Service

Level Agreement