
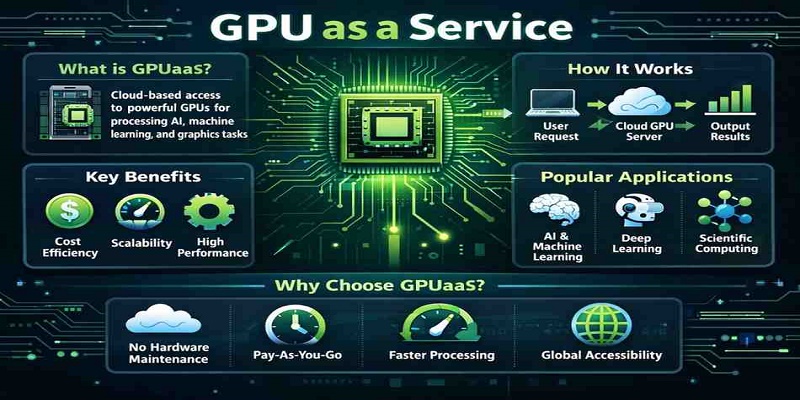

The computational landscape has shifted dramatically. In 2023, enterprises spent over $61 billion on GPU hardware, yet most of this computing power sits idle 60-80% of the time. This inefficiency has catalyzed a fundamental change in how organizations approach high-performance computing infrastructure. The global GPU-as-a-service market was valued at $3.23 billion in 2023 and is projected to grow from $4.31 billion in 2024 to $49.84 billion by 2032, a compound annual growth rate of roughly 36% that reflects the strategic pivot from ownership to access-based computing models.

For CTOs and technical leaders navigating this landscape, the question isn’t whether to adopt GPU rental strategies, but how to optimize them for maximum ROI while maintaining operational excellence. This analysis examines the technical and economic factors driving the GPU rental revolution, with particular focus on the NVIDIA A100 ecosystem that has become the gold standard for enterprise AI workloads.

Market Dynamics and Economic Drivers

The GPU rental market explosion stems from three converging forces: capital efficiency requirements, workload variability, and technological advancement velocity. The global market for GPU server rental services was valued at US$12,062 million in 2024 and is projected to reach US$34,376 million by 2031, growing at a CAGR of 16.5% during the forecast period. This estimate evidently uses a broader market definition than the GPU-as-a-service figure cited in the introduction, so the two should not be compared directly.
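The growth rates behind these projections can be checked with a few lines of arithmetic. The sketch below is illustrative only: the `cagr` helper is a hypothetical name, and it assumes the forecast period runs from the 2024 figure to the final projected year. Under that assumption the GPU-as-a-service projection works out to about 35.8% per year and the server rental projection to a little over 16%, slightly below the quoted 16.5%, which may reflect a different base-year convention in the underlying report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# GPU-as-a-service projection from the introduction (US$ billions, 2024 -> 2032).
gpuaas_cagr = cagr(4.31, 49.84, years=2032 - 2024)

# GPU server rental projection (US$ millions, 2024 -> 2031).
# Assumption: 2024 is the base year of the forecast period.
server_rental_cagr = cagr(12062, 34376, years=2031 - 2024)

print(f"GPU as a service:  {gpuaas_cagr:.1%}")
print(f"GPU server rental: {server_rental_cagr:.1%}")
```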

Capital Allocation Optimization

Traditional GPU procurement creates significant capital allocation challenges. A single NVIDIA A100 80GB costs approximately $15,000-$20,000 in hardware alone, before factoring in infrastructure, cooling, power, and maintenance costs. For enterprises requiring multi-GPU clusters for machine learning training or inference, initial investments can exceed $500,000 for relatively modest deployments.

The rental model transforms this fixed capital expenditure into operational expenses that scale directly with usage. Organizations can deploy GPU resources for specific projects, training cycles, or inference demands without the burden of depreciation, maintenance, or technological obsolescence. This shift is particularly crucial given that GPU technology cycles have accelerated, with new architectures emerging every 18-24 months.

Workload Elasticity and Demand Patterns

Enterprise AI workloads exhibit highly variable compute requirements. Machine learning model training may require intensive GPU clusters for days or weeks, followed by months of minimal usage. Research and development projects often need burst capacity for experimentation and hyperparameter optimization. Traditional owned infrastructure cannot efficiently accommodate these demand patterns without significant overprovisioning.

GPU rental services provide elasticity that matches workload demands precisely. Teams can scale from single GPU instances for development to multi-node clusters for production training, then scale back to inference-optimized configurations. This elasticity extends beyond simple scaling to include GPU architecture optimization, allowing teams to select the most appropriate hardware for specific workloads.

NVIDIA A100: The Enterprise Standard

The NVIDIA A100 Tensor Core GPU has established itself as the benchmark for enterprise AI and high-performance computing applications. Built on the Ampere architecture, the A100 delivers significant performance improvements over previous generations while introducing architectural innovations that enhance both training and inference workloads.

Technical Specifications and Performance Characteristics

The A100 architecture incorporates several key innovations that directly impact enterprise workloads:

Tensor Core Performance: The third-generation Tensor Cores provide up to 312 TFLOPS of dense FP16/BF16 throughput for mixed-precision training, with support for multiple numerical formats including FP16, BF16, TF32, and INT8. This flexibility allows developers to optimize models for different stages of the AI pipeline, from training to inference deployment.

Memory Architecture: The A100 is available with 40GB of HBM2 (about 1.6TB/s of memory bandwidth) or 80GB of HBM2e (about 2.0TB/s). The larger memory capacity enables training of increasingly large language models and supports batch processing of complex workloads that were previously memory-constrained.

Multi-Instance GPU (MIG) Technology: Perhaps most relevant for rental scenarios, MIG allows a single A100 to be partitioned into up to seven independent GPU instances. This technology enables fine-grained resource allocation and improved utilization rates, particularly beneficial in multi-tenant cloud environments.

NVLink and NVSwitch Connectivity: The A100 supports high-speed GPU-to-GPU communication through NVLink 3.0, providing 600GB/s of bidirectional bandwidth. This connectivity is crucial for distributed training workloads and multi-GPU inference deployments.
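The MIG partitioning described above can be reasoned about as a budget of slices. The following is a minimal sketch, not NVIDIA tooling: it assumes an A100 80GB exposes 7 compute slices and 8 memory slices, uses the standard MIG profile names, and checks only whether a requested mix stays within those totals. Real MIG placement has additional position rules, so passing this check is necessary but not sufficient. The function name `fits_on_one_gpu` is hypothetical.

```python
# (compute slices, memory slices) per MIG profile on an A100 80GB.
# Assumption: slice counts follow NVIDIA's published profile table.
A100_80GB_PROFILES = {
    "1g.10gb": (1, 1),
    "2g.20gb": (2, 2),
    "3g.40gb": (3, 4),
    "4g.40gb": (4, 4),
    "7g.80gb": (7, 8),
}
MAX_COMPUTE_SLICES = 7
MAX_MEMORY_SLICES = 8


def fits_on_one_gpu(requested: list[str]) -> bool:
    """Necessary-condition check: do the requested profiles fit the slice budget?

    Ignores MIG placement rules, so True does not guarantee a valid layout.
    """
    compute = sum(A100_80GB_PROFILES[name][0] for name in requested)
    memory = sum(A100_80GB_PROFILES[name][1] for name in requested)
    return compute <= MAX_COMPUTE_SLICES and memory <= MAX_MEMORY_SLICES


print(fits_on_one_gpu(["1g.10gb"] * 7))          # seven small tenants
print(fits_on_one_gpu(["3g.40gb", "4g.40gb"]))   # two larger tenants
print(fits_on_one_gpu(["4g.40gb", "4g.40gb"]))   # exceeds compute slices
```

For a renter, the practical point is that a single A100 can be billed as one device while serving several isolated development or inference workloads.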

Performance Benchmarks and Workload Optimization

Real-world performance data demonstrates the A100’s capabilities across diverse enterprise workloads:

Large Language Model Training: The A100 delivers roughly a 4x performance improvement over the V100 for large transformer models, reducing training time for BERT-Large from 3.5 days to approximately 20 hours using optimized configurations.

Computer Vision Workloads: ResNet-50 training performance shows a 2.5x improvement over the V100, with particularly strong gains in mixed-precision scenarios that leverage the enhanced Tensor Core architecture.

High-Performance Computing: Traditional HPC applications benefit from the A100’s double-precision performance, with molecular dynamics simulations showing up to 2x performance gains over previous generation hardware.

Inference Optimization: The A100’s support for multiple numerical precisions enables significant inference acceleration, with up to 5x throughput improvements for optimized models using TensorRT and other NVIDIA optimization frameworks.
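For rental decisions, a speedup matters because billing is per hour: a faster GPU is cheaper per job as long as its hourly premium is smaller than its speedup. The sketch below applies this to the BERT-Large figures above (3.5 days on V100, about 20 hours on A100). The hourly rates in the usage lines are placeholders; in particular the V100 rate is a hypothetical value, not a quoted price.

```python
V100_HOURS = 3.5 * 24   # BERT-Large training time on V100, from the text
A100_HOURS = 20.0       # approximate training time on A100, from the text

speedup = V100_HOURS / A100_HOURS  # implied speedup, about 4.2x


def cost_per_run(hours: float, hourly_rate: float) -> float:
    """Rental cost of one training run on a single instance."""
    return hours * hourly_rate


def a100_is_cheaper(a100_rate: float, v100_rate: float) -> bool:
    """True when the A100 run costs less than the V100 run."""
    return cost_per_run(A100_HOURS, a100_rate) < cost_per_run(V100_HOURS, v100_rate)


# Hypothetical rates for illustration only.
print(f"Implied speedup: {speedup:.1f}x")
print(a100_is_cheaper(a100_rate=2.50, v100_rate=0.80))
print(a100_is_cheaper(a100_rate=4.50, v100_rate=1.00))
```

Equivalently, the A100 wins on cost per run whenever its hourly rate is below about 4.2 times the V100 rate.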

GPU Rental Pricing Analysis

Understanding GPU rental pricing requires analysis of multiple variables including provider infrastructure, service level agreements, geographic availability, and additional services. Current market pricing for NVIDIA A100 instances varies significantly across providers and configurations.

Pricing Structure Components

Base Instance Pricing: Hyperstack lists its NVIDIA A100 80GB cloud GPU at $1.35/hour, the low end of the market. Across providers, A100 80GB instances range from $1.35 to $4.50 per hour depending on provider, location, and service level. DataCrunch, Vultr, and RunPod balance cost-effectiveness and availability, with hourly rates between $2.20 and $2.60.

Pricing Variables and Considerations:

- Instance Type: A100 40GB instances typically cost 20-30% less than 80GB variants

- Commitment Levels: Reserved instances can reduce costs by 30-50% compared to on-demand pricing

- Geographic Location: US East Coast and European regions often command premium pricing due to demand concentration

- Network and Storage: Additional costs for high-performance networking, premium storage, and data transfer can add 15-25% to base instance costs

- Support and SLA: Enterprise-grade support and uptime guarantees typically add 10-20% to base pricing
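The variables above can be combined into a rough effective hourly rate when comparing quotes. This is a sketch under stated assumptions: the `Quote` class and its field names are hypothetical, the reserved discount is applied to the base rate first, and the networking/storage and support uplifts are treated as additive percentages of the discounted rate. Actual provider billing may compose these differently.

```python
from dataclasses import dataclass


@dataclass
class Quote:
    base_rate: float                      # on-demand $/GPU-hour
    reserved_discount: float = 0.0        # e.g. 0.30 for a 30% commitment discount
    network_storage_uplift: float = 0.0   # e.g. 0.20 for 20% extra
    support_uplift: float = 0.0           # e.g. 0.15 for 15% extra

    def effective_rate(self) -> float:
        """Estimated all-in $/GPU-hour (assumes additive uplifts on the discounted rate)."""
        discounted = self.base_rate * (1 - self.reserved_discount)
        return discounted * (1 + self.network_storage_uplift + self.support_uplift)


# Example using mid-range values from the list above.
quote = Quote(base_rate=2.50, reserved_discount=0.30,
              network_storage_uplift=0.20, support_uplift=0.15)
print(f"Effective rate: ${quote.effective_rate():.2f}/hour")
```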

Total Cost of Ownership Analysis

For enterprise decision-making, comparing rental costs to ownership requires comprehensive TCO analysis including:

Purchase Scenario TCO:

- Hardware: $18,000 (A100 80GB)

- Server Infrastructure: $8,000-12,000

- Networking: $5,000-8,000

- Power and Cooling (3 years): $4,000-6,000

- Maintenance and Support (3 years): $3,000-5,000

- Total 3-Year TCO: $38,000-49,000

Rental Scenario Analysis:

- At $2.50/hour average pricing: $21,900 annually for full utilization

- Typical enterprise utilization (30-40%): $6,570-8,760 annually

- 3-Year Cost at 35% utilization: $22,995

At these figures, rental is clearly cheaper when utilization stays below 50-60%. Given the 60-80% idle time cited earlier, that covers the majority of enterprise workloads.
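The comparison can be reproduced with a short calculation. The sketch below uses only the figures from the two scenarios above and makes one simplifying assumption: the ownership TCO is treated as fixed regardless of utilization, although power costs would in practice vary somewhat with load. The function names are illustrative.

```python
HOURS_PER_YEAR = 8760


def rental_cost(hourly_rate: float, utilization: float, years: int = 3) -> float:
    """Total rental spend when paying only for utilized hours."""
    return hourly_rate * HOURS_PER_YEAR * utilization * years


def break_even_utilization(ownership_tco: float, hourly_rate: float, years: int = 3) -> float:
    """Utilization at which rental spend equals the ownership TCO."""
    return ownership_tco / (hourly_rate * HOURS_PER_YEAR * years)


RATE = 2.50                          # average $/hour from the rental scenario
TCO_LOW, TCO_HIGH = 38_000, 49_000   # 3-year ownership TCO range

three_year_rental = rental_cost(RATE, utilization=0.35)
be_low = break_even_utilization(TCO_LOW, RATE)
be_high = break_even_utilization(TCO_HIGH, RATE)

print(f"3-year rental at 35% utilization: ${three_year_rental:,.0f}")
print(f"Break-even utilization: {be_low:.1%} to {be_high:.1%}")
```

With these inputs, three years of rental at 35% utilization comes to $22,995, and rental stays cheaper than ownership until utilization reaches roughly 58% (against the low TCO estimate) to 75% (against the high estimate).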

Provider Ecosystem and Service Differentiation

The GPU rental market has evolved beyond simple infrastructure provision to include specialized services and optimizations:

Hyperscale Cloud Providers: AWS, Azure, and Google Cloud provide comprehensive GPU services with extensive integration into their broader cloud ecosystems. Pricing is typically premium but includes enterprise-grade reliability, compliance, and integrated services.

Specialized GPU Cloud Providers: Companies like CoreWeave, Paperspace, and Lambda Labs focus specifically on GPU workloads, often providing better price-performance ratios and specialized optimization for AI workloads.

Emerging Providers: Newer entrants including TensorDock, DataCrunch, and Vast.ai offer competitive pricing and innovative features, though with potentially less mature enterprise support structures.

Technical Integration and Deployment Considerations

Successful GPU rental implementation requires careful consideration of technical integration points, performance optimization, and operational workflows.

Infrastructure Integration Patterns

Hybrid Deployment Models: Many enterprises adopt hybrid approaches, maintaining baseline GPU capacity on-premises while using cloud rental for burst workloads, specialized hardware requirements, or geographic expansion.

Container Orchestration: Kubernetes-based deployments enable portable workloads across different GPU providers, reducing vendor lock-in and enabling cost optimization through provider arbitrage.

Data Pipeline Optimization: Network bandwidth and data transfer costs significantly impact total project costs. Optimizing data pipelines, implementing intelligent caching, and co-locating compute with data sources are crucial for cost-effective deployments.
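As an illustration of the container orchestration pattern above, the sketch below builds a minimal Kubernetes Pod manifest that requests a GPU through the `nvidia.com/gpu` resource, which is the name exposed by the NVIDIA device plugin. It assumes the target cluster has that plugin installed; the pod name, container name, and image reference are placeholders. Because the manifest names no provider-specific resources, the same definition can in principle be applied to clusters at different GPU providers.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Build a minimal Pod manifest requesting `gpus` NVIDIA GPUs."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": "trainer",  # placeholder container name
                    "image": image,
                    # GPUs are requested via limits on the device-plugin resource.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


# Placeholder image reference; substitute a real training image.
manifest = gpu_pod_manifest("train-job", "registry.example.com/team/train:latest")
print(json.dumps(manifest, indent=2))
```

The JSON output can be saved to a file and applied with `kubectl apply -f`, since Kubernetes accepts JSON as well as YAML manifests.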

Performance Optimization Strategies

Multi-Tenancy and Resource Sharing: MIG technology enables efficient resource sharing within organizations, allowing multiple projects to share expensive GPU resources while maintaining performance isolation.

Workload Scheduling and Automation: Implementing intelligent workload scheduling systems that automatically provision, scale, and terminate GPU resources based on demand patterns can reduce costs by 40-60% compared to static allocations.

Framework Optimization: Leveraging GPU-specific optimizations in frameworks like PyTorch, TensorFlow, and specialized libraries such as RAPIDS can dramatically improve performance per dollar.
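The savings from demand-based provisioning come from paying only for the GPU-hours jobs actually consume rather than holding peak capacity for the whole period. The sketch below compares the two for a one-week window. The job list is hypothetical, and the static allocation is assumed to be sized for the largest single job and kept running all week.

```python
def static_gpu_hours(peak_gpus: int, window_hours: int) -> int:
    """GPU-hours billed when peak capacity is held for the whole window."""
    return peak_gpus * window_hours


def on_demand_gpu_hours(jobs: list[tuple[int, int]]) -> int:
    """GPU-hours billed when each job's GPUs are released as soon as it finishes."""
    return sum(gpus * hours for gpus, hours in jobs)


# Hypothetical week: (GPUs needed, hours of runtime) per job.
jobs = [(8, 72), (2, 60), (1, 40)]
WINDOW_HOURS = 7 * 24

peak_gpus = max(gpus for gpus, _ in jobs)
static_hours = static_gpu_hours(peak_gpus, WINDOW_HOURS)
dynamic_hours = on_demand_gpu_hours(jobs)
savings = 1 - dynamic_hours / static_hours

print(f"Static: {static_hours} GPU-hours, on-demand: {dynamic_hours} GPU-hours")
print(f"Savings: {savings:.0%}")
```

For this particular job mix the saving is about 45%; burstier schedules would save more and steadier ones less.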

Future Market Trajectory and Strategic Implications

The global data center GPU market size was estimated at USD 14.48 billion in 2024 and is projected to reach USD 190.10 billion by 2033, growing at a CAGR of 35.8% from 2025 to 2033, indicating sustained demand growth that will continue driving rental market expansion.

Emerging Technology Impact

Next-Generation Architectures: NVIDIA’s H100 and upcoming architectures will likely follow similar rental adoption patterns, with early availability through cloud providers preceding widespread on-premises deployment.

Specialized Workload Acceleration: The emergence of specialized chips for inference, training, and specific AI workloads will create more diverse rental market segments with optimized pricing for different use cases.

Edge Computing Integration: The extension of GPU rental models to edge computing scenarios will enable new deployment patterns for real-time AI applications.

Strategic Recommendations for Enterprise Leaders

Portfolio Approach: Develop a portfolio strategy that combines owned baseline capacity with rental resources for burst demands, specialized requirements, and technology evaluation.

Vendor Diversification: Establish relationships with multiple GPU rental providers to ensure availability, competitive pricing, and risk mitigation.

Cost Management Framework: Implement comprehensive cost monitoring and optimization frameworks that track utilization, performance metrics, and cost per workload across different providers and configurations.

Technology Evaluation Pipeline: Use rental resources for evaluating new GPU architectures, software frameworks, and deployment patterns before making significant capital commitments.
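A cost management framework of the kind recommended above can start as a simple aggregation of usage records. The following is a minimal sketch: the `UsageRecord` fields, the `cost_by` helper, and the provider and workload names are all hypothetical, and a real system would pull these records from provider billing exports.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRecord:
    provider: str
    workload: str
    gpu_hours: float
    hourly_rate: float  # $/GPU-hour actually billed


def cost_by(records: list[UsageRecord], key: str) -> dict[str, float]:
    """Total spend grouped by a record attribute such as 'provider' or 'workload'."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[getattr(record, key)] += record.gpu_hours * record.hourly_rate
    return dict(totals)


# Hypothetical usage for one month.
records = [
    UsageRecord("provider-a", "training", gpu_hours=100, hourly_rate=2.50),
    UsageRecord("provider-b", "training", gpu_hours=40, hourly_rate=1.35),
    UsageRecord("provider-a", "inference", gpu_hours=200, hourly_rate=2.20),
]

by_provider = cost_by(records, "provider")
by_workload = cost_by(records, "workload")
print(by_provider)
print(by_workload)
```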

Conclusion

The GPU rental market represents a fundamental shift in enterprise computing strategy, driven by economic efficiency, technological velocity, and workload optimization requirements. For organizations with variable or specialized GPU requirements, rental models provide compelling advantages in cloud cost management, technology access, and operational flexibility.

The NVIDIA A100 ecosystem has established clear performance and compatibility standards that enable confident deployment decisions. Current pricing trends favor rental models for most enterprise workloads, particularly those with utilization rates below 60% or requirements for specialized hardware configurations.

Success in this evolving landscape requires strategic thinking about workload patterns, vendor relationships, and technology evaluation processes. Organizations that develop sophisticated GPU resource management capabilities will gain significant competitive advantages in AI deployment speed, cost efficiency, and technological adaptability.

As the market continues its rapid expansion, early adoption of optimized GPU rental strategies will become increasingly crucial for maintaining technical leadership and operational efficiency in AI-driven business transformation initiatives.
